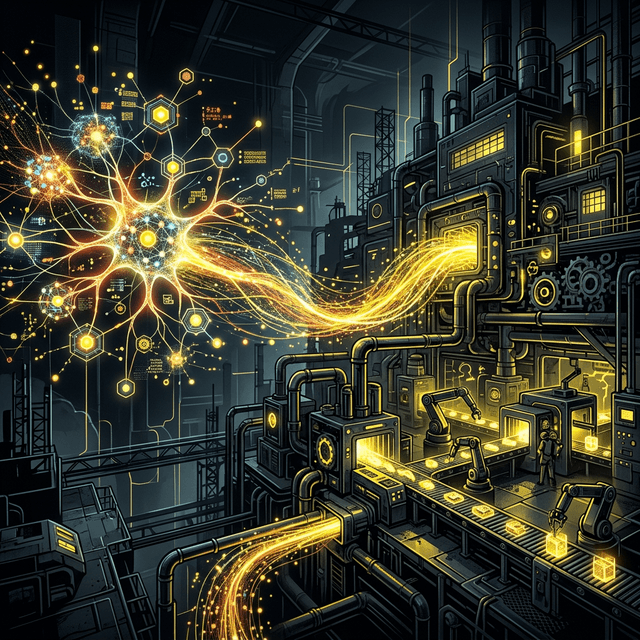

The critical components of an MLOps pipeline that ensure your AI models deliver consistent value in the real world.

The 90% Problem

A frequently cited industry estimate holds that roughly 90% of machine learning models that reach the prototype stage never make it to production. The data scientists who built the model are often shocked by this statistic: the model worked beautifully in the notebook. But the notebook is not the product. The product is a reliable, maintainable, monitored system that serves accurate predictions at scale, updates as the world changes, and degrades gracefully when it encounters inputs it was not trained on.

The MLOps Stack

MLOps is the discipline of applying DevOps principles to the machine learning lifecycle. It comprises five core capability areas: data management, model training pipelines, model registry and versioning, model serving infrastructure, and model monitoring.

Data management involves ensuring the quality, lineage, and accessibility of training data. We use Great Expectations for automated data validation and Apache Airflow or Prefect for pipeline orchestration. Model training pipelines should be reproducible, versioned, and executable on demand, not a collection of notebooks that one particular data scientist runs on their laptop. MLflow or Weights & Biases provide experiment tracking and model registry capabilities.
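To make "reproducible and versioned" concrete, here is a minimal sketch in plain Python (not any specific tool's API) that derives a run ID from a hash of the training data and configuration, and draws all randomness from a seeded generator. It assumes rows are JSON-serialisable dicts and that the config carries `seed` and `sample_size`; `run_fingerprint`, `train` and the toy bootstrap-mean "model" are hypothetical illustrations.

```python
import hashlib
import json
import random


def run_fingerprint(rows, config):
    """Deterministic ID for a training run: same data + same config -> same ID."""
    h = hashlib.sha256()
    # Canonical JSON (sorted keys) so dict key order cannot change the hash.
    h.update(json.dumps(config, sort_keys=True).encode("utf-8"))
    for row in rows:
        h.update(json.dumps(row, sort_keys=True).encode("utf-8"))
        h.update(b"\n")  # row separator
    return h.hexdigest()[:12]


def train(rows, config):
    """Toy training step whose only randomness comes from config["seed"]."""
    rng = random.Random(config["seed"])  # never seed from the clock
    sample = [rng.choice(rows) for _ in range(config["sample_size"])]
    # Stand-in "model": the mean label of a bootstrap sample.
    weight = sum(r["label"] for r in sample) / len(sample)
    return {"run_id": run_fingerprint(rows, config), "weight": weight}
```

Logging a fingerprint like this alongside the model artefact (for example as a run parameter in an experiment tracker) makes it possible to tell whether two registered models were trained on the same data and settings. For large datasets, hashing a versioned snapshot identifier is likely cheaper than hashing every row.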

From Training to Serving

Model serving (exposing a trained model as an API that can be called at inference time) is where most MLOps implementations struggle. The key decisions are online vs batch serving (does the application need a real-time prediction, or can it consume a pre-computed batch?), the serving framework (Triton Inference Server for GPU-accelerated workloads, Seldon Core or BentoML for more flexible serving), and the scaling strategy.
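Whatever the serving framework, the core contract is the same: parse and validate a request, run the model, return a prediction. The sketch below is framework-agnostic and hypothetical (the linear `WEIGHTS`/`BIAS` model stands in for a real trained artefact, and none of this is the Triton, Seldon Core or BentoML API); it shows the online and batch paths sharing one `score` function.

```python
import json

# Hypothetical toy model standing in for a trained artefact.
WEIGHTS = {"age": 0.02, "visits": 0.1}
BIAS = -1.0


def score(features):
    """Shared scoring logic used by both the online and batch paths."""
    return BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)


def handle_online(request_body: str) -> str:
    """Online serving: one JSON request in, one JSON prediction out."""
    try:
        payload = json.loads(request_body)
        features = {name: float(payload[name]) for name in WEIGHTS}
    except (ValueError, KeyError, TypeError) as exc:
        # Malformed JSON, missing fields or non-numeric values.
        return json.dumps({"error": f"bad request: {exc}"})
    return json.dumps({"score": score(features)})


def run_batch(records):
    """Batch serving: score many pre-collected records, e.g. in a nightly job."""
    return [score(r) for r in records]
```

Routing both paths through the same scoring code is one way to keep them from drifting apart; in production, `handle_online` would sit behind whichever HTTP or gRPC layer the chosen framework provides.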

For models that receive highly variable traffic, serverless inference — where the compute resources scale to zero when idle and scale out rapidly on demand — is economically attractive. AWS SageMaker Serverless Inference, Google Cloud Vertex AI, and Modal are leading options in this space.
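Managed serverless platforms implement their own autoscaling, but the underlying arithmetic is roughly: replicas needed = ceiling(request rate / per-replica capacity), capped at a maximum, with a drop to zero after a sustained idle period. The policy below is a hypothetical sketch of that idea, not the algorithm used by SageMaker, Vertex AI or Modal; the 300-second idle window is an arbitrary assumption.

```python
import math


def desired_replicas(requests_per_sec, capacity_per_replica, max_replicas,
                     idle_seconds, scale_to_zero_after=300):
    """Hypothetical scale-to-zero autoscaling policy."""
    if requests_per_sec <= 0:
        # No traffic: keep one replica warm briefly, then release all compute.
        return 0 if idle_seconds >= scale_to_zero_after else 1
    # Enough replicas to absorb the load, at least one, never above the cap.
    needed = math.ceil(requests_per_sec / capacity_per_replica)
    return min(max(needed, 1), max_replicas)
```

The trade-off is cold-start latency: the first request after scaling to zero has to wait for a replica (and the model weights) to load, which is why latency-sensitive endpoints often keep a minimum of one warm replica.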

Model Monitoring: The Often-Neglected Foundation

A model deployed without monitoring is a liability. Machine learning models degrade over time as the real-world distribution of inputs drifts away from the training distribution (data drift) or as the relationship between inputs and the target itself changes (concept drift). A model trained on pre-pandemic purchasing behaviour will make increasingly poor recommendations as consumer habits shift.
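One common way to quantify data drift on a single numeric feature is the Population Stability Index (PSI), which compares the binned proportions of training values e_i against live values a_i: PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i). The sketch below uses equal-width bins over the training range and a small epsilon to avoid log(0); both choices, and the function name `psi`, are assumptions, and quantile bins are an equally valid option.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` (live) vs `expected` (training)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            i = int((v - lo) / width)
            # Live values outside the training range land in the edge bins.
            counts[min(max(i, 0), bins - 1)] += 1
        # Floor each proportion at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A widely used rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as moderate drift and above 0.25 as significant drift, though thresholds are best tuned per feature. PSI only looks at inputs, so it detects data drift; concept drift generally needs labels to detect.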

A robust monitoring pipeline tracks three categories of signals: data quality metrics (are inputs within expected ranges? are there nulls or format changes?), prediction distribution metrics (is the distribution of model outputs shifting?), and business outcome metrics (is the downstream business metric the model is optimising for holding up?). Tools like Evidently AI, Arize, and WhyLabs provide purpose-built monitoring for ML systems.
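The three signal categories can be checked together on each batch of live traffic. This is a hypothetical sketch rather than the API of Evidently AI, Arize or WhyLabs: `Baseline` holds reference values assumed to be captured at training time, outcomes are assumed to be binary business events (for example, a click), and all thresholds are illustrative.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Baseline:
    """Hypothetical reference values captured at training time."""
    feature_ranges: dict   # feature name -> (min, max) seen in training
    pred_mean: float       # mean model output on validation data
    pred_std: float        # its standard deviation
    business_rate: float   # e.g. conversion rate the model is optimised for


def _is_bad(value, lo, hi):
    # None, a missing key, a non-numeric value (format change) or out of range.
    return not isinstance(value, (int, float)) or not lo <= value <= hi


def monitor(rows, preds, outcomes, base,
            max_bad_rate=0.01, max_shift_sd=0.5, max_business_drop=0.10):
    alerts = []
    n = len(rows)
    # 1. Data quality: share of null, malformed or out-of-range inputs.
    for feat, (lo, hi) in base.feature_ranges.items():
        bad = sum(_is_bad(r.get(feat), lo, hi) for r in rows)
        if bad / n > max_bad_rate:
            alerts.append(f"data quality: {feat} has {bad / n:.0%} bad values")
    # 2. Prediction distribution: shift of the mean output in baseline std units.
    shift = abs(statistics.fmean(preds) - base.pred_mean) / base.pred_std
    if shift > max_shift_sd:
        alerts.append(f"prediction drift: mean moved {shift:.1f} sd")
    # 3. Business outcome: relative drop in the downstream rate.
    rate = statistics.fmean(outcomes)
    if rate < base.business_rate * (1 - max_business_drop):
        alerts.append(f"business metric: {rate:.2%} vs {base.business_rate:.2%}")
    return alerts
```

In practice, business outcomes often arrive hours or days after the prediction, so that third check typically runs on a delayed window.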

Continuous Training and the Feedback Loop

The most mature MLOps teams practise continuous training: the model is automatically retrained on a schedule (or when a drift signal fires) by a pipeline that pulls fresh labelled data, trains the model, evaluates it against held-out test sets and, if it passes the quality gates, deploys it to production via a canary or blue-green strategy.
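The control logic of that loop (when to retrain, whether the candidate may ship, how much traffic it gets) can be sketched in a few functions. Everything here is hypothetical: the metric names (`auc`, `p95_latency_ms`), the thresholds and the hash-based canary split are illustrative choices, not a specific orchestrator's behaviour.

```python
import hashlib


def should_retrain(days_since_training, drift_score,
                   max_age_days=30, drift_threshold=0.25):
    """Retrain on a schedule OR when a drift signal (e.g. PSI) crosses a threshold."""
    return days_since_training >= max_age_days or drift_score > drift_threshold


def passes_quality_gate(candidate, production, min_gain=0.0, max_p95_ms=100.0):
    """Both metric dicts are assumed to come from the same held-out test set."""
    better = candidate["auc"] >= production["auc"] + min_gain
    fast_enough = candidate["p95_latency_ms"] <= max_p95_ms
    return better and fast_enough


def route(request_id, canary_fraction):
    """Send a stable, hash-selected fraction of callers to the candidate."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 10_000
    return "candidate" if bucket < canary_fraction * 10_000 else "production"
```

Hashing a stable request or user ID, instead of choosing randomly per request, keeps each caller on one version for the duration of the canary. A blue-green rollout would instead switch all traffic at once after the new environment is verified, keeping the old one ready for rollback.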

The feedback loop — collecting labels for model predictions so they can be used for future training — is the most valuable investment in the long-term health of an ML system. Implementing a labelling workflow, even an imperfect one, closes the loop and enables the system to continuously improve.
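Closing the loop mostly comes down to logging a stable ID with every prediction so that labels arriving later can be joined back to it. The sketch below assumes a hypothetical log schema (`prediction_id`, `features`, `prediction`) and a dict of labels keyed by the same ID; unlabelled predictions are simply skipped until a later run.

```python
def join_feedback(predictions, labels):
    """Pair logged predictions with later-arriving labels.

    predictions: list of dicts with "prediction_id", "features", "prediction"
    labels: dict mapping prediction_id -> true label (may be incomplete)
    Returns (training examples, live accuracy or None if nothing is labelled).
    """
    examples, correct = [], 0
    for p in predictions:
        label = labels.get(p["prediction_id"])
        if label is None:
            continue  # not labelled yet; pick it up in a later run
        examples.append({**p["features"], "label": label})
        correct += int(p["prediction"] == label)
    live_accuracy = correct / len(examples) if examples else None
    return examples, live_accuracy
```

The joined rows double as fresh training data for continuous training and as a live accuracy signal for monitoring. Because the predictions that get labelled are rarely a random sample of traffic, that accuracy figure is best treated as an estimate rather than ground truth.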